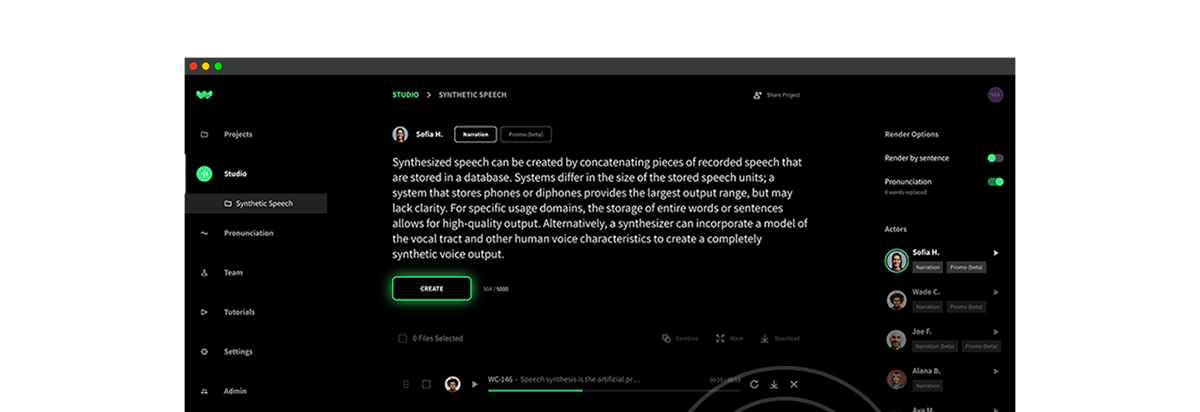

Audio by Jack C. using WellSaid Labs

In an era where our digital avatars often speak louder than our physical selves, the curtain rises on a new act, or better yet a tragedy, of technological performance: deepfakes. This remarkable yet unnerving technological mysticism, born from rapid advancements in AI, crafts illusions so convincingly real that they flicker dangerously with deceit.

Unfortunately, this is where we find ourselves today. Yikes.

In truth, generative AI is, by nature, a double-edged sword. It opens breathtaking new realms in the arts and offers unprecedented accessibility solutions. Paradoxically, it also gives malicious actors a potent weapon to mislead, exploit, and coerce.

Recall a time (not so distant) when distributing a video or an image to a mass audience was constrained by technology and means. The digital evolution has unforgivingly obliterated those limitations. As a result, the potential impact is something we've truly never seen before.

In 2023 alone, around half a million video and voice deepfakes are projected to flood our social media spheres. Meanwhile, the World Economic Forum describes a scenario in which deepfake videos surge at an almost unfathomable annual rate of 900%.

The unsettling reality of this digital deception undeniably demands our collective vigilance, but the mantle of responsibility is heavy. One might argue it's unjustly placed on the shoulders of the everyday person, and we'd have to agree. For now, though, while we await more stringent protective measures, we unfortunately have to rely a bit more on our own judgment.

So, as we navigate the underbelly of AI and explore its potential and pitfalls, let's dig deeper into deepfakes: how to recognize their warning signs and how to grapple with their ethical entanglements.

Warning sign 1: Unsettling silences

Elongated pauses are often the first warning note of an audio deepfake. Attackers hiding behind manipulated audio must type out what they want the simulated voice of their chosen guise to say. That process predictably produces delays and awkward halts in conversation.

The stark unnaturalness of these pauses is often perceptible. So stay attuned to voices with an artificial timbre, strange accents, or deviations from familiar speech patterns.

Warning sign 2: Urgent emotional ambush

Vijay Balasubramaniyan, a voice security maven from Pindrop, has cast light on a particularly deceptive deepfake strategy: the exploitation of urgency and emotional turmoil. Your caution should therefore flare at unsolicited outreach that whispers tales of loved ones in jeopardy or presses for personal information, especially if financial transactions lurk in the shadows.

Any communication that seems strangely misaligned with the character of the sender, or that employs high-pressure, emotionally charged tactics, should prompt you to pause, disconnect, and reestablish contact through trusted channels.

Warning sign 3: Visual glitches

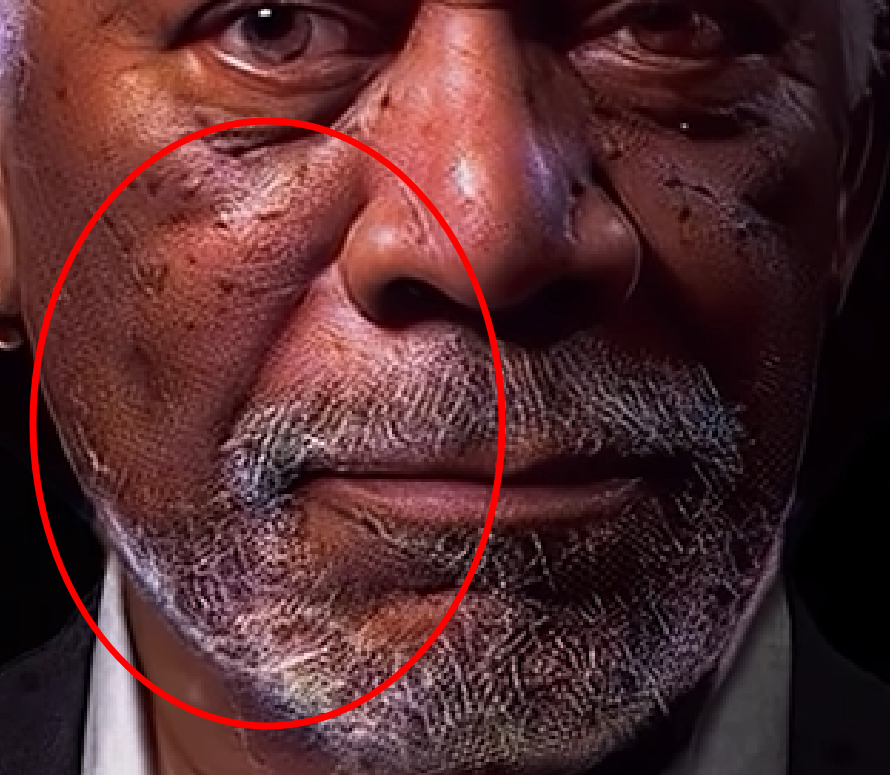

Deepfakes, especially those of lesser quality, may inadvertently expose their illusion through a mosaic of visual glitches: isolated blurs, doubled edges around the face, fluctuating video quality, or inconsistent backgrounds and lighting. These visual aberrations tear through the facade and signal that a deepfake is probably at play.

Warning sign 4: Suspicious sources

Who approached whom? Where did they come from? These are questions to mull over when unsolicited digital specters of people or companies materialize. Maintain a fortified skepticism, particularly when encountering potentially polarizing content involving celebrities or politicians. Scrutinize and verify the true source before accepting digital interactions at face value.

Warning sign 5: Distorted decibels

When it comes to audio deepfakes, a hodgepodge of irregularities can unveil their synthetic origins: choppy sentences, peculiar inflections, abnormal phrasing, or incongruent background noise. Keeping your ears finely tuned to these auditory signals can help you tell the genuine from the counterfeit.

Warning sign 6: Unnatural movement

Deepfakes often stumble when mimicking the seamless flow of natural human movement. Anomalies such as erratic eye movements, infrequent blinking, and misaligned facial features or expressions often puncture their believability. Deepfakes have also traditionally struggled to render the inside of the mouth accurately. So pay attention to details like individual teeth and tongue movements; an inaccurate portrayal of them often signals a deepfake.

Warning sign 7: Inconsistent imagery

Upon encountering an image that incites suspicion, scrutinizing its finer details can reveal its true nature. Manipulated images often fumble with accurate portrayals of light and shadow, and might present peculiarities like distorted hands or inconsistent skin tones and textures across different body parts.

Image credit: Geekflare

Warning sign 8: Low quality video

Limited by their frame-by-frame generation, deepfakes often keep facial movement to a minimum and favor predominantly front-facing shots to maintain the illusion. They are also frequently delivered at lower quality, which masks imperfections and artificially generated components. Try switching to the highest available resolution and viewing on a large screen; doing so may well reveal the content's artificial nature.

Concluding thoughts on decoding deception

In the digital arena where authenticity and artifice play an unceasing tug of war, our exploration of deepfakes exposes stark reflections of our collective technological conscience. It’s here, in this web of synthesized realities, that the ethical spirit of AI demands our undivided attention. Companies, *cough* like WellSaid Labs *cough*, staunchly uphold a banner of ethical AI practices. And with these types of solutions, you can let down your guard. Breathe a sigh of relief.

The dynamism of generative AI presents a paradox, blessing us with boundless creative possibilities while shadowing us with the looming specter of digital mirages. As we navigate the road ahead, let us bear in mind that the art of discerning the authentic from the artificial is more than a technical skill. It's an ethical imperative.

In the solemn words of astronomer Carl Sagan, "Extraordinary claims require extraordinary evidence." It's a mantra that resonates aptly in our era of deepfakes, prompting us to probe, validate, and champion truth amid the cascading tides of digital duplicity.