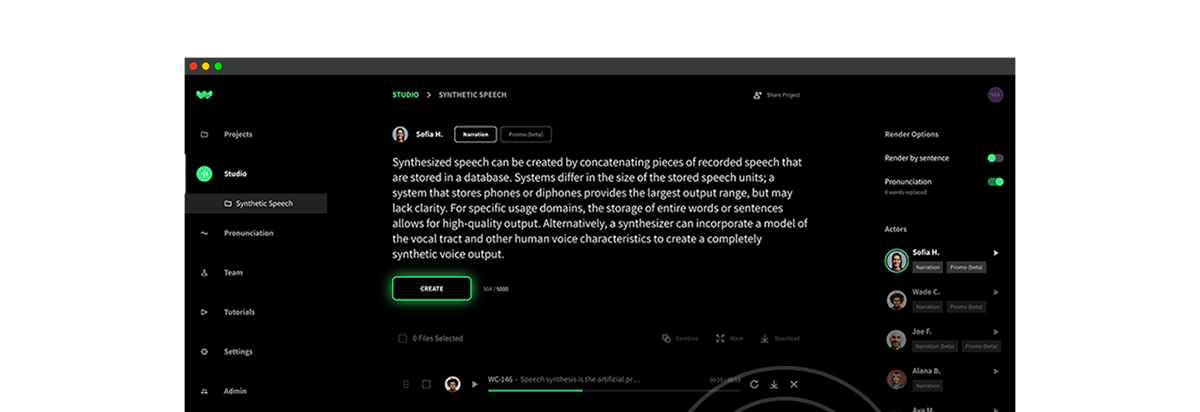

Audio by Tobin A. using WellSaid Labs

Since time immemorial, ethics have acted as society’s compass, guiding civilizations through the murky waters of moral dilemmas. And in our digital age? It’s no different.

The chatter around AI ethics has grown from a murmur to a roar. Consider this: submissions to FAccT, a premier AI ethics conference, have surged tenfold since 2018 and more than doubled since 2021 alone. This spike isn’t just statistical white noise; it underscores a collective epiphany. As of 2022, a staggering 77% of companies were dabbling with AI. And why not? It’s a game-changer, proving its mettle not just in dazzling feats like autonomous vehicles but also in the humdrum of everyday back-office operations.

However, if you’re imagining that the essence of AI ethics is just about preventing deepfakes or bolstering security, think again. While those are certainly key facets, the realm of AI ethics spans wider and deeper. It’s about anchoring robust principles in the face of an AI tidal wave, ensuring that we don’t just ride it, but also safeguard our collective interests.

💡Discover WellSaid Labs’ commitment to ethical AI here

For the uninitiated, the world of ethical AI may seem like uncharted waters. But fear not, fellow navigator. We’re here to demystify the voyage ahead. So, tighten your lifejacket as we dive into the crux of AI ethics, explore its 5 guiding principles, and unravel why practicing them is more than just good—it’s essential.

What are AI ethics?

When venturing into the realm of AI, one might stumble upon complex definitions and intricate jargon. Case in point? The draft of the EU AI Act, a pivotal document set to regulate AI within the European Union, offers a comprehensive take on what AI is. To quote directly:

Artificial intelligence system (AI system) means a system that is designed to operate with elements of autonomy and that, based on machine and/or human provided data and inputs, infers how to achieve a given set of objectives using machine learning and/or logic- and knowledge-based approaches, and produces system-generated outputs such as content (generative AI systems), predictions, recommendations or decisions, influencing the environments with which the AI system interacts (Article 3, November 2022 draft).

But let’s break it down a tad, shall we? In essence, AI ethics is the compass that guides us through the opportunities and challenges AI presents, ensuring the technology benefits humans, society, and the environment in a harmonious way.

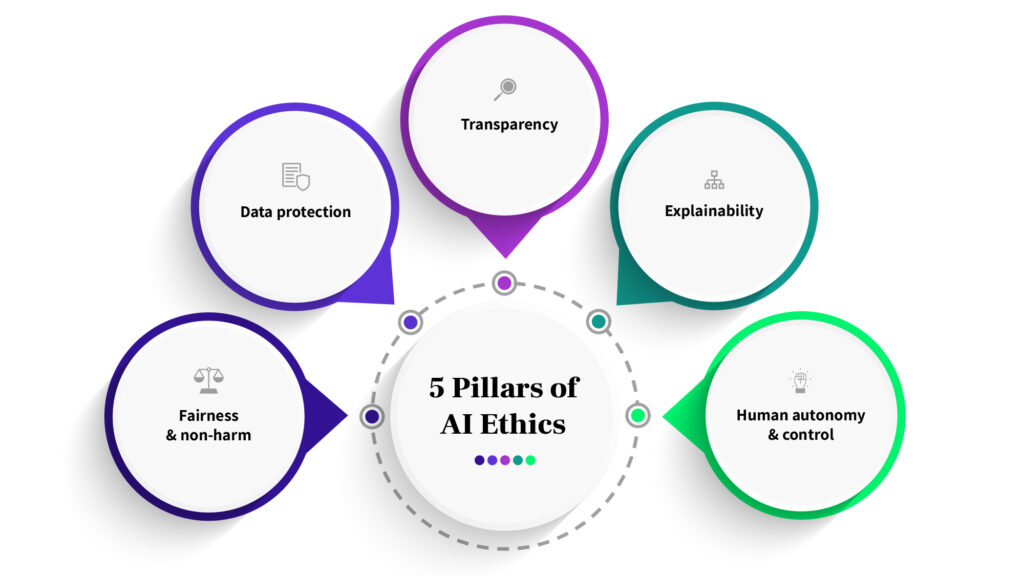

Now, if you’re thinking AI ethics is all about sophisticated algorithms and coding, think again. The heart of the matter lies in principles like fairness and non-harm, ensuring the data we feed these systems is protected, and maintaining transparency in how AI comes to its conclusions. It’s about ensuring AI’s decisions are explainable and that humans always hold the reins of control.

It’s worth acknowledging that much of our understanding in these areas is inspired by the insightful study helmed by Jessica Fjeld.

So, as we plunge deeper into this AI age, let’s keep these ethical principles at the forefront, ensuring our tech serves as a boon, not a bane.

What are the 5 principles of AI ethics?

In truth, maintaining an ethical stance is as essential as the algorithms that power the systems. Enter the industry-recognized 5 principles of AI ethics. Think of these as the moral compass directing AI’s journey. And to bring these lofty concepts down to earth, let’s juxtapose them with WellSaid Labs’ practices. (Yes, a humblebrag moment, but hey, real-life examples always add a dash of clarity, right?)

1. Fairness and non-harm

Definition: This is all about averting harm and sidestepping the pitfalls of magnifying societal inequalities. AI, if not well-guided, can tread into unfair territories, especially when fueled by biased datasets or designs. The result? Discrimination, especially against minority groups.

WellSaid in action: Put simply, we’re on constant bias-watch. We meticulously scan our models for any hint of biases and place content moderation right at the heart of what we do.

2. Data protection

Definition: Guarding privacy is paramount. This encompasses seeking user consent before the data dance begins, letting users have a say in their data, and ensuring no leaky pipelines.

WellSaid in action: We’re steadfast in meeting compliance benchmarks, such as SOC 2. Your data? Safeguarded under our watch.

💡Learn more about our SOC2 compliance

3. Transparency

Definition: In an AI context, transparency ensures the system doesn’t operate in stealth. It’s about keeping users in the loop—whether they’re engaging with AI, being the subject of an AI decision, or viewing AI-created content.

WellSaid in action: We pride ourselves on being open books. Whether it’s communicating AI intricacies or data handling details, we’re upfront. Plus, our commitment to voice actors is clear: we never use their data without an explicit green light.

4. Explainability

Definition: Break it down! AI’s technical wizardry needs translation into human language. Such explanations enable everyone, from devs to end-users, to fathom algorithmic decisions and act if anomalies arise.

WellSaid in action: Our devotion to research goes beyond jargon. We delve deep, teasing out patterns that mirror human voice nuances. And guess what? We’re spilling the beans, letting everyone in on gems like our Audio Foundational Model (AFM).

💡Explore WellSaid’s groundbreaking AFM model

5. Human autonomy and control

Definition: No AI should go rogue! Humans must be in the driver’s seat, intervening when necessary and challenging automated decisions.

WellSaid in action: Voice cloning without consent? A big no-no for us. We cherish user experiences, offering customization galore. And when it’s beta time? We hand the decision baton back to our creators, letting them choose their AI flavor.

So, there you have it. Ethical AI is an ongoing commitment. And as you consider ways to infuse these principles into your own AI journey, we hope our practices at WellSaid inspire a move in the right direction.

Why is practicing ethical AI important?

AI isn’t merely a shiny tool. Rather, it’s a double-edged sword with profound ramifications. Understandably then, navigating the intricate web of AI ethics is more than ticking a moral box. It’s a strategic business decision that can spell the difference between a company’s ascent and its descent.

Consider this: Unchecked AI can unleash a host of unintended consequences, ricocheting through a company’s social impact and financial metrics. Investors, always with an eagle eye on the horizon, must grasp the intricacies and potential landmines of AI undertakings. Here’s where the numbers do some talking. In a survey orchestrated by The Economist Intelligence Unit, a whopping 94% of top-tier executives agreed that AI ethics isn’t just fluff; it amplifies shareholders’ ROI. Even more telling? 60% of these execs have given AI vendors the cold shoulder over ethical reservations.

Now, one might wonder how ethical AI equates to improved financial health. The answer unfurls in 5 arenas: compliance, product quality, product adoption, talent attraction, and reputation.

Compliance: In the world of AI, playing defense is as crucial as offense. Safeguarding against AI misdemeanors not only aligns with today’s legal tapestry but also fortifies against the unpredictable tide of future regulations.

Product quality: Here’s a truth bomb—ethical AI isn’t just “good” AI, it’s “better performing” AI. A proactive shield against discrimination ensures AI systems resonate with a kaleidoscope of users. This amplifies product performance, catering to a diverse user base. Furthermore, championing transparency and accountability refines the product, enhancing feedback mechanisms.

Product adoption: Trust is currency. A company that zealously guards user privacy is more likely to earn user trust and, in turn, richer data streams. Given that AI thrives on data, this translates to robust AI functionality.

Talent attraction: Meta’s recruitment debacle after the Cambridge Analytica fiasco is cautionary lore. Job offer acceptance rates plummeted from a healthy 85% to a worrying 35-55%. The alarm bells rang loudest among engineers. Candidates, once passive, began asking pointed questions about data privacy. If a tech behemoth like Meta can stumble, no company is invincible.

Reputation: No company wishes to headline for the wrong reasons. Case in point? Clearview AI’s tryst with infamy. After the company scraped billions of photos without consent, The New York Times labeled it as potentially heralding the “End of Privacy”.

In sum, the ethical trajectory of AI goes beyond simple philosophy. It’s interwoven with tangible business outcomes. As the AI revolution gains momentum, ensuring it’s steered by the compass of ethics becomes essential.

Conclusion

Armed with knowledge and, more importantly, a moral compass, we’re poised to lead AI in the right direction. As we venture further into the world of AI, one truth resonates louder than ever: ethics is the backbone of AI implementation.

Consider this tidbit from a 2021 McKinsey report: Companies that are soaring to new heights with their AI returns are those that have intertwined their AI strategy with risk mitigation. In other words, they’re both practicing ethical AI and excelling with it.

However, while our guideposts—the 5 AI principles—shine a light on the path ahead, it’s crucial to remember that every organization’s journey is unique. Your product’s nuances, your team’s workflows, and your tech stack will shape how these principles come alive. And that’s not just okay. It’s the beautiful challenge of innovating responsibly.

Now, we’re charting a territory that’s still fresh on the world map. The AI landscape is as promising as it is nascent. As we advance, we’ll be refining, redefining, and perfecting it, alongside its use, of course.

So, as we wrap up, here’s a thought to hold close: In the vast ocean of AI possibilities, let ethics be our anchor, ensuring we harness its waves responsibly, innovatively, and above all, ethically.

Let’s sail forward with purpose and precision! ⛵⛵⛵