There are more sources for artificial intelligence news every day. As the topic becomes trendy, where is a reliable source of information? Emerj is trusted by leaders at Fortune 500 companies for research and guidance on AI. WellSaid CRO, Martín Ramírez, was a guest on the Emerj podcast to discuss AI Voice.

Listen to the Podcast

In this half-hour discussion, Matt DeMello and Martín explore the potential of AI voice in the financial services sector. They then extend the discussion to multiple enterprise use cases, ranging from customer service portals to sonic branding and beyond.

Listen on Soundcloud

What Emerj Said About the Session:

Today’s guest is Martín Ramírez, Head of Growth at WellSaid, a company specializing in AI-driven voice-over tools. There are surprising use cases for these capabilities across various industries.

In conversation with Emerj Senior Editor Matthew DeMello on the ‘AI in Financial Services’ podcast, Martín focuses on enterprise applications.

From learning and training to supplementing conversational AI, Martín describes what is necessary to return enterprise-wide results from truly transformational use cases.

LEARN MORE: Generative AI Map from Sequoia Capital

Later, they discuss ways that information must transition from print to digital and, in an increasing number of multimedia workflows, from digital to audio.

More About Emerj Podcasts

Emerj releases a podcast episode weekly, interviewing leaders at different artificial intelligence companies. In addition to the Financial Sector podcast, there are also AI Business and AI Consulting podcasts.

How Emerj Describes the Financial Sector Podcast:

Stay ahead of the curve as artificial intelligence disrupts the financial services sector.

Discover the lessons learned from organizations like HSBC, Citigroup, and Visa; learn business strategies from venture capitalists investing in AI for the financial services industry; and see the future with AI banking innovators from Silicon Valley and around the world.

Each week Emerj Founder Daniel Faggella interviews top experts to bring their best insights to our global audience. Each episode draws from the unique perspective of our guests, and the data and insights from our market research on the impact of AI in financial services.

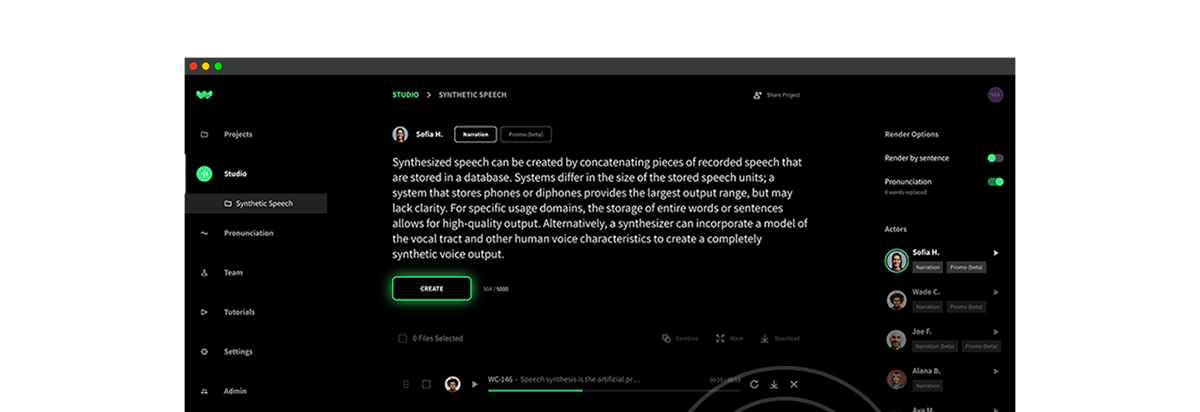

Learn more about how other companies are using WellSaid for the best text-to-speech API.

Transcript

Matt DeMello: [00:00:00] Welcome everyone to the AI and Financial Services podcast. I am your host, Matthew DeMello, senior editor here at Emerj. Today’s guest is Martín Ramírez, head of Growth at WellSaid, a company specializing in AI-driven voiceover tools. It is truly surprising how many applications there are for this technology, and across how many industries.

Martín does a great job on today’s show keeping use cases focused on enterprise needs and demands, and also on where we’re seeing information have to transition not only from print to the digital space, but from print to digital and then on to audio. It’s a much wider margin than you would think, even if I’m a little biased working in podcasts.

Without further ado, here’s today’s episode. [00:01:00]

Thank you so much for being with us on the show, Martín. Excellent and very good to have you. So what we’re looking at today is the application of AI in voice for the enterprise, and we want to see, from your perspective at WellSaid, where you feel enterprise leaders are looking to apply AI to voice-related challenges.

What do you see as those challenges?

Martín Ramirez: Yeah, thank you Matthew. I think that’s a, that’s a very strong premise. For the longest time, it could have been simplified to thinking about efficiencies in workflow, efficiencies in capital deployment, or the cost of the production. And those are factors that remain very important to the enterprise and leaders who are managing teams of collaborators, building content that gets to be expressed and published through. [00:02:00]

But I think there is a layer on top of those efficiencies that is more important and continues to increase in importance, which is the quality of those productions. One of the things that we’ve learned working with Fortune 100 companies and other customers is the sensitivity that their listeners have, and how aggressive, for lack of a better term, their perception of quality is.

So we are now in a world where even internal stories that organizations have to tell to their people must be delivered without sacrificing quality for the sake of any of those efficiencies that we might have leveraged in the past, mm-hmm, as justification to build with technologies like ours, for example.

So thinking about, yes, how can we leverage the power of technology and all this computational power to generate more content, more [00:03:00] efficiently without sacrificing quality becomes the name of the game from what we’ve seen over the past few years.

Matt DeMello: Very, very interesting stuff. And also, I know there’s an incorporation for the sense of like quality control and also the kind of the spread of the product that you’re also integrating, like the, the work of voice actors, you know, throughout.

But I, I assume, like at the end of the day, this is just like the, you know, you’re, you’re recording with them in order to solve workflow-related problems. Just with, I mean, there’s, there’s a zillion efficiencies, just the amount of wherewithal it takes from an organization standpoint to solicit like a voiceover recording from a traditional actor.

I just say that from experience. I’ve, I’ve made professional podcasts for 10 years now, and I’ve worked in voiceover work for narrative podcasts. That’s a huge form of thought leadership we’re seeing in a lot of these spaces, but if you wanna talk about that potential use case or others you, you kind of see around that.

I know learning and training has to be another big [00:04:00] one.

Martín Ramirez: Yeah. And, and I think you, you touched on something that is integral to the health of our ecosystem and it’s the voice talent, the voice actor and the collaboration and relationship we have with them. It all begins from the voice of a real individual and they are incentivized.

They get to monetize the publishing of content that happens through our. And most importantly, we also serve as a marketplace for them to service use cases that are not humanly possible. And let me give you an example. Think that you are a global retailer with millions of products running campaigns in parallel with different values and attributes specific to different regions.

You might have the same ad copy for a 30-second, right, an audio ad in this case, with the specifics of the location or personalization of that particular ad copy within the territory where it is going to be expressed [00:05:00] or published. Now, you can use the likeness of yourself. For example, if we were to create a synthetic voice of Matthew using our AI, now the retailer can use your likeness.

You get to monetize from the production of that advertising, and you can run thousands of permutations in seconds. So the same ad can be propagated with the same sonic brand, with the same sonic identity. And that’s, that’s the power that we are able to, to harness by. Yes, we do work with real people. They are the, they are the essence.

We work with you as a voice talent to declare the personality of an AI. We work with you to determine and agree upon which type of content you want created with your likeness. It could be a one-on-one replica of your likeness, or you could be acting out a particular character that could be used in a production, right?

But thinking about that type of work, where sending an individual [00:06:00] to a booth when that person could be chasing more aligned creative, but now they can still monetize from the 4,000 permutations of the same ad on, insert top drink here, if you will. Right. So that’s, that’s one of the things where we see a lot of adoption.
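The ad-permutation idea described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the template wording, the `build_ad_scripts` function, and the sample cities and products are all hypothetical and are not part of any WellSaid API. The sketch only produces the script variants; rendering each one in a licensed voice would be a separate step.

```python
from itertools import product
from string import Template

# Hypothetical ad copy; the placeholders are illustrative, not a real campaign.
AD_COPY = Template("This week only in $city: $product_name for $price.")


def build_ad_scripts(cities, products):
    """Return one script per (city, product) pair.

    `products` is a list of (name, price) tuples. Every script shares the
    same copy, so one voice can carry the same sonic identity across all
    of the variants.
    """
    scripts = []
    for city, (name, price) in product(cities, products):
        scripts.append(
            AD_COPY.substitute(city=city, product_name=name, price=price)
        )
    return scripts


if __name__ == "__main__":
    for script in build_ad_scripts(["Austin", "Boise"], [("cola", "$2")]):
        print(script)
```

The number of scripts grows as cities times products, which is why a few dozen regions and a few hundred products quickly reach the thousands of permutations mentioned in the conversation.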

And to your point as well, L&D, learning and development, is a very interesting space, because you are looking at organizations that have a lot of complex information, not only with regards to their HR policies. We’re talking about cybersecurity training. We’re talking about safety at the workplace. You might have pharmaceuticals with new drugs and manufacturing processes that are highly regulated.

And all of this content is constantly being updated. This is literally evergreen content, right? And there are times that just a fraction of a training module has to be updated. Without a technology like ours [00:07:00] or similar to what we do, you would have to recreate the whole training module and/or find the very same voice actor to do the ADR split, insert the new piece of copy, and replace a training module.

With a programmatic approach, you can literally find the timestamp that you need to replace with the new information and re-render with the AI. It preserves the same likeness as the original piece of content, and now you have updated copy for your voiceover for that particular training module. So I just kind of peppered there a little bit between what the consumer space looks like, in the case of advertising, and a traditional use case that we see a lot with learning and development organizations.
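The "find the timestamp and re-render" workflow can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: the `Segment` structure, the `update_segment` helper, and the `fake_render` stand-in for a text-to-speech call are hypothetical and do not describe how WellSaid stores or renders training modules.

```python
import bisect
import zlib
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Segment:
    start_s: float  # where the segment begins in the module, in seconds
    text: str       # narration script for this segment
    audio_id: str   # identifier of the rendered audio clip


def fake_render(text: str, voice: str) -> str:
    """Stand-in for a text-to-speech call; returns a deterministic clip id."""
    return f"{voice}-{zlib.crc32(text.encode('utf-8')):08x}"


def update_segment(segments, timestamp_s, new_text, voice, render=fake_render):
    """Re-render only the segment that contains `timestamp_s`.

    `segments` must be a list sorted by `start_s`. All other segments are
    returned untouched, so the rest of the module keeps its original audio.
    """
    starts = [segment.start_s for segment in segments]
    idx = bisect.bisect_right(starts, timestamp_s) - 1
    if idx < 0:
        raise ValueError("timestamp precedes the first segment")
    updated = replace(
        segments[idx], text=new_text, audio_id=render(new_text, voice)
    )
    return segments[:idx] + [updated] + segments[idx + 1:]
```

Because the same voice is passed to the renderer for the replaced segment, the updated clip keeps the same likeness as its neighbours, which is the point made in the conversation.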

Matt DeMello: No, no, no. That makes perfect sense. And I’ll also say that’s probably a really great way to segue into where we probably see the more relevant applications of AI, even outside of the respect to the voice, and I’m sure we can get into that, but probably of more relevance to [00:08:00] our audience is how you’re using legacy systems, data lakes, you know, an organizational repository of data with which to inform what that voice says in trainings and how that gets updated, you know, seamlessly, those, those sorts of solutions. Can you speak to kind of the enterprise applications we’re seeing in AI in those areas?

Martín Ramirez: Yeah, certainly. And, and I think it’s there, there is a balance and at least I can speak from, from our approach and the way that we’ve seen the enterprise deploying and adopting our tech.

Our kernel or, or, or our central models, if you will, have the capability in a given language to speak any word imaginable within that domain. There are certainly some edge cases, if we may call them edge cases, where, and I’m going back to that pharmaceutical example, [00:09:00] the terminology is highly complex.

You might have some drug names, you might have some chemical process or, or a manufacturing process that is not in the everyday lingo, if you will. By plugging into our API, for example. And, and, and Matthew, do let me know if I’m getting too product-centric here.

Matt DeMello: No, you’re fine. Plug, no, no, go for it.

Martín Ramirez: One of the things that we can do is you can influence the training models by providing specific.

To the given domain or subject matter expertise that you are intending to use one of our avatars for. All that will do is increase the likelihood that when a new, highly complex chemical name or drug name is being rendered, the AI will say it correctly the first time. But the payload of sorts on our customers to be able to use our generative AI to create voiceover is very,

Our voices and our AI models are [00:10:00] pretty strong. The augmentation of that dataset to increase the lexicon of an AI avatar is a nice-to-have, to be very honest. But the more data we have, the higher the fidelity. If you have data lakes, if you have data banks of information that at some point you think will influence any sort of narration that you expect to do with an AI voice, we will take it and we will use it as part of training data, right? As an augmentation, not necessarily as a hard requirement for you to be successful using this technology, if that makes sense.
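One way a customer might decide which domain terms are worth supplying as augmentation data is to scan their own documents for words outside a general vocabulary. The Python sketch below is an assumption-laden illustration of that preparation step, not the training process discussed in the interview: the `candidate_terms` function, the `known_words` set, and the `min_count` threshold are all hypothetical.

```python
import re
from collections import Counter


def candidate_terms(documents, known_words, min_count=2):
    """List unfamiliar words that recur in a set of domain documents.

    `known_words` is a set of lowercase everyday words. Anything outside it
    that appears at least `min_count` times is returned, most frequent first,
    as a candidate for pronunciation review or lexicon augmentation.
    """
    counts = Counter()
    for document in documents:
        for token in re.findall(r"[A-Za-z][A-Za-z\-]+", document):
            word = token.lower()
            if word not in known_words:
                counts[word] += 1
    return [word for word, count in counts.most_common() if count >= min_count]
```

In a pharmaceutical setting, for example, drug names that recur across training scripts would surface near the top of the list, while one-off typos would fall below the threshold.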

Matt DeMello: Oh, it absolutely does. Also, you peppered in a few mentions of use cases just in answering that last question, specifically with life sciences and drugs, and I think that was a very clear picture to draw.

I’m also very interested to know where you see the crossover in what you guys do. There’s so much of a, of a focus in financial services and voice. You know, whether that’s the call center, whether that’s having any sort of interaction where you can glean [00:11:00] how the customer views the company brand and how, you know, how, how great an experience they’re having in their systems.

You know, and these aren’t just like idle applications of the word voice. And yes, it does have a larger context outside of just vocal cords, of course, but yeah. Yeah. I, I, if you can speak to, you know, the potential crossovers here with, you know, conversational agents, mm-hmm, and other things we’re seeing in financial services.

Martín Ramirez: Got, yeah, certainly. And, and I’ll give you an A and B side, B side being a little bit on the blue sky thinking perspective, but it’s, it’s approaching and it’s approaching fast. But let’s say, for example, the traditional chatbot application or a call center, where frustration and customer satisfaction are two very important metrics that a financial institution will be keeping track of.

We wanna make sure that we’re ushering in an experience where the individual, the consumer at one end of that transaction, is getting to the information she needs as soon as [00:12:00] possible. We have a partnership with Five9, which is in the business telephony world, and one of the things that we’ve learned throughout the collaboration with Five9 and financial institutions that are using the Five9 infrastructure to build conversational experiences is any degradation in the quality of the experience that includes the.

That includes the routing. That includes getting to the answer in a certain amount of time. It is detrimental for their brand as a whole. So by being able to create personalized content that is taking into account multiple variables within the context of the particular state in a conversation flow and the context of what problem the consumer is trying to solve, and generate narration that delivers that experience in audio form rather than pre-recorded prompts, which by the way, a prerecorded [00:13:00] prompt could be by a person or a legacy voice technology that ignores you and asks you to press zero 20 times in the same call because it doesn’t have the capability of saying anything relevant to what you were trying to solve.

Those components in the experience become. By having an engine that is layering upon the conversational intelligence language, deliver in a way that resonates with the individual. And that’s, that’s what’s very important. One of the things possibly tangential here, but when we work with brands in the financials industry and, and any other industries, and we’re building bespoke avatars for their.

It’s very easy to, to get hyper excited about what the voice sounds like. What’s the accent, right? What’s the implied gender? The pitch, and all of the things that you can use to describe the voice. At WellSaid, we like to take, uh, a different [00:14:00] direction. Yes, we will get to the attributes that define the characteristics of a voice that’s needed to build it.

But we like to ask, what is the listener doing? Where is she listening to this from? Is she on that conversational flow calling her bank because, mm-hmm, her credit card got locked? What is she attempting to accomplish? And after interacting with this voice, whatever that is, at that given point in time, what do we want her to accomplish?

What is the pre, during, and after experience that the voice is going to enable? And that’s very important because again, think about the conversational experience as a bunch of forks or decision trees that you will have, given the crux of what we understand the customer is trying to get to. And if we can take in that context and modulate the delivery and humanize that experience and create more of that connective tissue, we’re doing our job.

Our job is not simply to say things [00:15:00] programmatically. That’s kind of like years ago. That’s been there, done that. Now it is to contextualize that experience. Financial services is a great example, but any brand that has an understanding of the importance of sonic identity will be looking for not only, I want to direct my customer, in the context of a call center example, to the right path.

I also want what they listen to to be contextual and ideally even personalized. So that’s very pragmatic on, on business today. Now taking a, a, a leap of faith and, and let’s go on this journey for, for a minute, where immersive experiences are not a sci-fi use case anymore. We are, we are now seeing brands across many industries that are anticipating that breaking up from the black mirror, if you will.

The, the [00:16:00] smartphone creates many great experiences and, and we are reliant and dependent on those experiences today, but they are limited by the medium. So when I walk into a bank, when I walk into my gym, when I pull up my treadmill, whatever the case might be, whatever that context of the experience will be, there are opportunities for more immersion if we can deliver another canvas, another medium, right, beyond the eyes. And that’s those, those are experiences that, again, some of them are very blue sky thinking. Some of them are near sci-fi, but enterprise companies and big brands are investing heavily into figuring out what that next permutation, that next interface between computing and people will look like in the upcoming.

Matt DeMello: Yeah, and, and I mean, you can ask anybody on the conversational AI side of things if you’re [00:17:00] not doing this with kind of a big picture in mind and a real transformational change to the organization. I mean, I’d almost stop short on this podcast to say never, never do it, but that’d be pretty sound advice.

I’d say I, if I can speak outside of Emerj’s purview, and I’m sure we can follow this up with some, uh, language as to that in, in legalese. Just a, uh, last question here. If we can touch on the specific AI applications you’re especially seeing in those use cases for, for enterprise, especially where you’re kind of pulling from a data lake to try to make really data audio based, for, for lack of a better way of putting it.

Martín Ramirez: Yes. So an example that comes to mind is we have a, one of our tenants or customers is the explanation. And they built basically a search engine for children. And we’ve built [00:18:00] voices for, for their services where they have created multiple experiences like iPad applications, websites, and the like, where actually, and my daughters love this software as well, kids can ask, right, a question like, when did dinosaurs inhabit the world, whatever the case might be, and they have this awesome experience. There are characters and avatars that go to a library, which is their database of highly curated answers, contextualized for what a child who’s five years old can consume and understand and learn from, if you will.

Right. And again, their, their magic is gathering all these questions for the context of their app and their learning experience for a child. But where we provide value to them is now that you have done all this amazing educational scaffolding and, and structuring of this non-normalized data, if you have that, you have [00:19:00] all around.

What if we can help you tell this story in parallel to millions of kids who are interacting with your app and you wanna provide an engaging, interactive experience, not simply a cartoon with a chat bubble and text in it. And then again, this is a, a very close to my heart application because I love to see the girls playing with this app, and it’s literally plugging into an existing data bank that a subject matter expert has already organized, has already mapped to a given user prompt, and all we do is become the publishing link into delivering that knowledge, that information, that data, if you will, to the particular audience. In this case, children. Another example, more towards those our age: we have some fitness apps that are being built to train people remotely.

Some of the experiences that they provide are live streams with trainers, and [00:20:00] they’re giving cues and the like, but that’s one premium offering they might have within their marketplace. They have lots of exercises, training programs, recommendations, suggestions, diet plans. Again, big data being warehoused within their domain that also has to be embedded into the hardware and the experiences that they are providing.

People working out at their homes. Now they need to figure out, well, how can I mimic, to the best of my ability, the experience of the livestream through programmatic? And that’s again, enter WellSaid. We then partner with them into looking at the context. It’s gonna be the beat. Are we doing a meditation exercise?

Are we doing yoga? A cooling down exercise? Are we at the beginning of the session? Are we in the middle, going climbing and listening to hard rock and [00:21:00] trying to pump up the person doing the workout? Whatever the context might be, those become inputs that they do have already in their data banks, right? They already have this information, contextualize that information within the experience of a workout.

Now they can plug in and call out the right WellSaid avatar with the right emotion that delivers that personalized experience for the individual interacting with their hardware, if that makes sense. So those are two polarizing examples of how we plug into your database and deliver that to the listener.
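The step of turning workout context into a choice of voice and delivery can be pictured as a simple rule lookup. The Python sketch below is hypothetical throughout: the `WorkoutContext` fields, the avatar names, and the style labels are invented for illustration and do not correspond to actual WellSaid avatars or parameters.

```python
from dataclasses import dataclass

# Hypothetical set of activities that call for a calm delivery.
CALM_ACTIVITIES = {"meditation", "yoga"}


@dataclass(frozen=True)
class WorkoutContext:
    activity: str  # e.g. "yoga", "meditation", "climb"
    phase: str     # "warmup", "main", or "cooldown"


def choose_delivery(ctx: WorkoutContext) -> dict:
    """Map workout context to an (assumed) avatar name and delivery style."""
    if ctx.activity in CALM_ACTIVITIES or ctx.phase == "cooldown":
        return {"avatar": "calm_guide", "style": "soothing"}
    if ctx.phase == "warmup":
        return {"avatar": "coach", "style": "encouraging"}
    return {"avatar": "coach", "style": "high_energy"}


if __name__ == "__main__":
    print(choose_delivery(WorkoutContext(activity="climb", phase="main")))
```

The inputs are fields a fitness app would plausibly already hold in its own data, which matches the point made above: the context exists already, and the voice layer only needs to read it.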

Matt DeMello: Martín, I’ll tell you this: what I appreciate the most in any presentation is range, and I think age of any kind can be kind of an invisible range. Anybody that can talk to, you know, and make their message around a child, into an adult. I think there’s that old adage, Einstein said, if you can’t explain it to a six year old, you might not even know what you’re talking about.

And I think that’s a good maxim, might take that [00:22:00] everywhere, even in a lot of enterprise, you know, content discussions that, that we have going on. Martín, thank you so much for being with us.

Wrapping up today’s episode, I think there’s a lot to be said about the ways we’re seeing AI capabilities bifurcate in making human machine information interfaces more palatable to the human experience, and also where we’re seeing it serve purely administrative functions. For the former, we see that in the WellSaid example Martín brought up, where they apply AI tools to make their avatars more personable to the human experience, to make them look and sound more authoritative to other human beings.

Or in other words, to look more human themselves. Talk about an uncanny valley. The latter case was where we focused the discussion of today’s episode, and I hope you got as much out of it as I did. On behalf of Daniel and the entire team here at Emerj, thanks so much for joining us today, and we’ll [00:23:00] catch you next time on the AI and Financial Services Podcast.