Not all AI voice models are created equally. While most struggle to pronounce words correctly in context, jumble up dates and numerals, and make mistakes rendering acronyms, the newest WellSaid Voice Model uses a different approach.

WellSaid Voice Model Update

Watch this webinar replay to learn from Senior Machine Learning Engineer, Rhyan Johnson, how our voice model evolved to be the most lifelike AI voice available.

She will explain how speech is rendered by a voice model and what makes the WellSaid Voice Model unique. Don’t miss this incredible insight into how WellSaid Labs is revolutionizing text-to-speech!

More About the Most Lifelike AI Voice Model

In this version of the voice model, our Voice Team had several goals. First, they wanted to improve overall pronunciation on the first render of a text. That means finding a way to read words like “live” correctly, depending on the context of the sentence.

Notice how the word “live” is pronounced differently, when used as an adjective or a verb.

Second, they wanted the Voice Model to be better at reading dates and numerals, and to know how to render “2020” (pronounced “twenty-twenty”) and $1M (pronounced out of order as “one million dollars”). They also wanted the Voice Avatars to be able to read special characters like “&” and “/”.

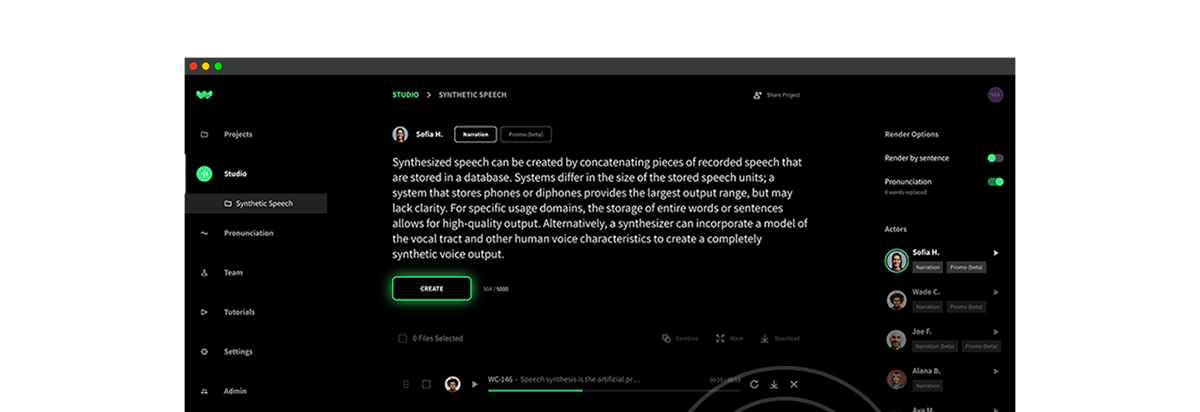

When you long into Studio, you will hear that the Voice Model update has made the avatars more accurate, predictable, and lifelike.

Respelling for Superior Speech Cues

In the webinar, Rhyan also talks about the new Respelling feature that came out in this Voice Model update. With Respelling, you can tell the Voice Avatar exactly how to pronounce an unusual word. This is especially helpful for proper nouns, difficult medical terminology, certain acronyms, and words that are borrowed from other languages in English.

READ MORE: Voice Model Update Product Release Notes

Additionally, with Respelling you can even tell the Voice Avatar which syllable should be emphasized in the pronunciation. We like to think of this as the ability to coach the avatar, just as you would a voice actor! Now, you can choose whether you’re a “poh-TAY-toh” or a “poh-TAH-toh” kind of person!

About Senior Machine Learning Engineer, Rhyan Johnson

As the Senior Voice Data and Machine Learning Engineer at WellSaid Labs, Rhyan works with the larger Voice team to build the best AI Voice Avatars. In her role, she refines the voice avatar after the Voice Model has trained on the recordings provided by the voice actor, making sure that the end result has no robotic or mechanical qualities. She is an expert in machine learning as it applies to AI voice and crafting the most lifelike synthetic voices ever.

Not a WellSaid Labs Studio or API customer? Start a no-commitment free trial to hear the latest Voice Model for yourself! Or, book a call with one of our experts to discuss your needs.